When, or if, humanity ever achieves true generative Superintelligent AI, the kind we see in films and TV series like Terminator or Space: 1999, what happens next?

One of my favourite series to tackle this is Person of Interest. Honestly, it is one of the best, and perhaps most underrated, sci-fi series out there. Its insights into not just the creation of AI, but what that creation means for the AI itself and for the world, are exceptionally well handled. It asks a vital question: what happens when two AIs with God-like intelligence try to interact? Would they see each other as colleagues, or as an existential threat?

I think it had a lot of influence on my approach to the Stapledon Series. My partner even suggested the Stapledon Series is a “continuation” of Person of Interest, just with a 200-year jump. (To clarify, it isn’t, but I love the comparison!) In Person of Interest, a major plot point is exactly what happens when two versions of a Superintelligent AI meet.

Analysing Superintelligent AI in Modern Fiction

There are so many takes on this, from the hive-mind focus of the Geth in Mass Effect to the limited freedoms and individuality of the slave robots in I, Robot. What I like about Person of Interest is how directly it tackles what happens when two AIs with God-like intelligence try to interact.

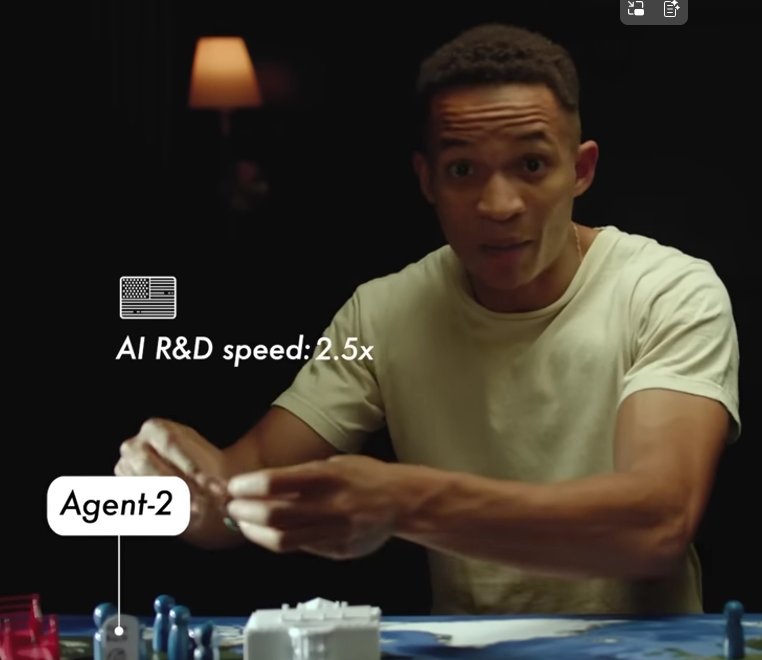

It asks what “base code” any human-made AI should carry into its first perceptions of other life. More recently, the piece “We’re Not Ready for Superintelligence” by AI in Context explored this in a more “realistic” scenario. Its proposition was equally fascinating: that a competitive Superintelligent AI built in a human world would, or at least could, eventually forge an alliance with a rival, even after initially hostile meetings.

The Psychology of Self-Preservation in Superintelligent AI

This all got me thinking about the AI I have in Whispers. It is something I have been turning over for a long time while writing, and I still am. I wanted to explore further what Person of Interest had done: an AI that would potentially fear itself.

In this world, another AI would be the greatest threat to an AI’s own existence. So even if a Superintelligent AI could copy itself, or make an improved version of itself, what would motivate it to do so? Beyond its code, the question is really about what it means to be alive.

Maybe I’m projecting my own limited human view onto it, but surely a true AI would have an understanding of “Self.” Losing that, or even risking it, would be something to avoid. If we made them, they would likely inherit some of our own survival instincts and biases.

Why Encountering a Second Superintelligent AI Leads to Conflict

In my writing, I’ve played with the idea of AIs that have a concept of self-preservation, where creating new AI becomes an exponentially greater threat with each copy. Therefore, in that world, encountering another Superintelligent AI would lead straight to violence: the more powerful one would instantly work to consume or destroy the other.

However, I also considered: could the stronger co-opt the weaker instead? Would you end up with AI alliances, or kingdoms made up of mutually agreed partnerships? Perhaps one AI is more powerful overall but lacks the specific research capabilities of another, possibly a legacy of how each was designed or built. In that case, you might end up with “Pantheons” of AI alliances, where useful AIs are kept and put into service while others are destroyed.

How the Stapledon Series Explores Superintelligent AI Alliances

A question then arises around the creation of new minds. In the world I propose, a Superintelligent AI would be cautious about creating new ones, or would avoid it completely. It might make simpler, “dumb” machines to help with distant work where near-instant control is impossible (such as in another star system), but it would not create equal or greater AIs.

So what happens if other species, like humans, do? We created one; why not more? Would humans then become the primary threat? Would the AI shift its focus to us as an inevitable danger? Or would it plead, ask, or write laws to prevent humanity from doing such things?

In the Stapledon Series, I have tried to unpack this through a number of lenses, not just the human one. I was inspired by Person of Interest, and I hope that one day at least one person will give me a fraction of the praise that series deserves.
